AI consensus is a contrarian bet in 2026. Major technology companies are writing single-LLM clauses into employee contracts — one approved model, no alternatives. I built Seekrates AI on the opposite premise: ask five models the same question, publish only what they agree on.

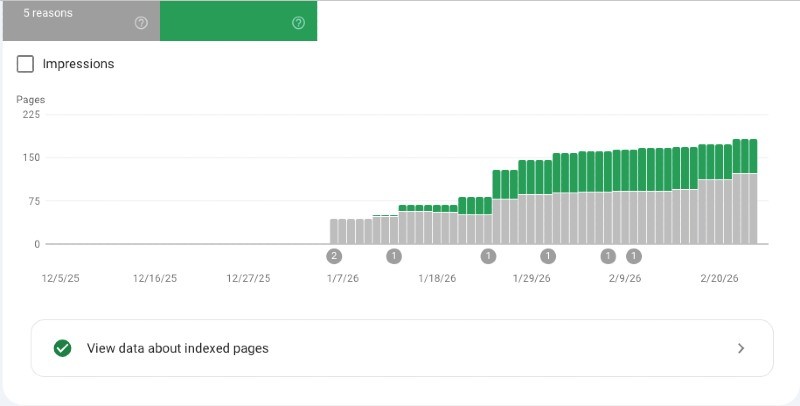

Then I published 201 posts built on that AI consensus methodology and watched what Google did with them.

Google indexed 84 of them. The other 117 were invisible — not penalised, not banned. Simply ignored.

This is what the audit found, and what I did about it.

The five reasons Google gave me

I opened Google Search Console, clicked Indexing, clicked Pages. A grey box and a green box. Grey (not indexed): 121. Green (indexed): 84. Five reason codes underneath.

Crawled - currently not indexed: 68 pages. Google visited, read the page, and decided it was not worth including. A quality judgement.

Excluded by noindex tag: 40 pages. My own site telling Google to skip them — a default setting applied automatically.

Not found (404): 8 pages. Dead links Google’s crawler tried to visit and found nothing.

Page with redirect: 4 pages. These URLs redirect elsewhere, so Google indexes the destination rather than the redirecting address.

Server error: 1 page.

The first two categories account for 108 of the 121 missing pages. Everything else is rounding error.
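The arithmetic behind that claim, as a quick Python tally. The counts are the five figures above; nothing else is assumed.

```python
# Not-indexed reason codes and page counts from the Search Console Pages report.
not_indexed = {
    "Crawled - currently not indexed": 68,
    "Excluded by noindex tag": 40,
    "Not found (404)": 8,
    "Page with redirect": 4,
    "Server error": 1,
}

total = sum(not_indexed.values())
top_two = (
    not_indexed["Crawled - currently not indexed"]
    + not_indexed["Excluded by noindex tag"]
)
share = top_two / total

print(f"Not indexed: {total}")             # Not indexed: 121
print(f"Top two reasons: {top_two}")       # Top two reasons: 108
print(f"Share of the total: {share:.0%}")  # Share of the total: 89%
```

The remaining 13 pages come to roughly 11% of the total.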

The noindex investigation

About half the noindexed pages were WordPress structural artefacts — archive pages, pagination pages, tag listings. Rank Math noindexes these by default. Correctly.

But the other half were real content pages. Nineteen blog posts about prompt engineering, marked noindex, all invisible to Google.

I checked the WordPress database directly. A SQL query against the post metadata table. Result: one post had noindex explicitly set. The other eighteen showed “index” in their settings. So why was Google seeing them as noindexed?

Time. Google had last crawled those posts in October 2025 — four months earlier. A bulk setting had applied noindex during initial setup. The setting was later changed, but Google hadn’t returned to check.
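The database check can be sketched as the query below, wrapped in Python with an in-memory SQLite table so it runs on its own. The table name `wp_postmeta` (default prefix), the `rank_math_robots` meta key and its PHP-serialized value format are assumptions about how Rank Math stores the setting, and the rows are hypothetical. On a live site you would run only the SELECT against the MySQL database.

```python
import sqlite3

# In-memory stand-in for the WordPress postmeta table (assumed name and prefix).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE wp_postmeta ("
    "meta_id INTEGER PRIMARY KEY, post_id INTEGER, meta_key TEXT, meta_value TEXT)"
)

# Hypothetical rows. Assumes robots directives live under "rank_math_robots"
# as a PHP-serialized array.
rows = [
    (101, "rank_math_robots", 'a:1:{i:0;s:7:"noindex";}'),
    (102, "rank_math_robots", 'a:1:{i:0;s:5:"index";}'),
    (103, "rank_math_robots", 'a:1:{i:0;s:5:"index";}'),
]
conn.executemany(
    "INSERT INTO wp_postmeta (post_id, meta_key, meta_value) VALUES (?, ?, ?)",
    rows,
)

# Which posts have noindex stored in the database right now?
query = """
    SELECT post_id
    FROM wp_postmeta
    WHERE meta_key = 'rank_math_robots'
      AND meta_value LIKE '%noindex%'
    ORDER BY post_id
"""
noindexed = [post_id for (post_id,) in conn.execute(query)]
print(noindexed)  # [101]
```

The result mirrors the pattern above: the database marks only one post noindex, so the others Google reports as noindexed must be coming from an older crawl.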

Fix: in Google Search Console’s URL Inspection tool, I submitted each URL individually and clicked Request Indexing. No code changes. No plugin reconfiguration. Just asking Google to look again.
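The split between the one post that really was noindexed and the eighteen that only looked that way to Google generalises into a small triage rule. A minimal sketch, assuming that for each post Google reports as noindexed you have the robots value from the database and the last-crawl date from URL Inspection. The `Post` type, the `diagnose` helper, the slugs and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Post:
    slug: str
    db_robots: str       # what the database says now: "index" or "noindex"
    last_crawled: date   # "Last crawl" date shown by URL Inspection


def diagnose(post: Post, setting_changed: date) -> str:
    """Triage a post that Google currently reports as noindexed."""
    if post.db_robots == "noindex":
        return "fix setting"       # noindex is genuinely set; change it first
    if post.last_crawled < setting_changed:
        return "request recrawl"   # Google is still looking at the old state
    return "investigate"           # crawled after the change, still noindexed


# Hypothetical date the bulk setting was changed, and two example posts.
changed = date(2025, 11, 20)
posts = [
    Post("prompt-chaining-basics", "noindex", date(2025, 10, 12)),
    Post("few-shot-examples", "index", date(2025, 10, 14)),
]
for p in posts:
    print(p.slug, "->", diagnose(p, changed))
```

Only the "request recrawl" bucket is fixed by asking Google to look again; the other two need a change on the site first.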

The 73 broken doors

The eight 404 errors in the indexing report were the visible tip. The Rank Math 404 Monitor showed the real problem: 73 URLs returning errors, accumulating 81 total crawler hits.

54 of the 73 were tag pagination pages WordPress generates automatically and never cleans up.

Fix: one regex redirect rule in Rank Math:

/tag/(.+)/page/[0-9]+/ → /tag/$1/

One rule. Forty seconds. 54 broken URLs resolved.
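To see what that rule does before trusting it with live traffic, it can be tested in Python. The pattern is the one above with `$1` rewritten as Python’s `\1`. Using `fullmatch` to anchor it to the whole path, and matching against a path with a leading slash, are assumptions; check how your redirect plugin anchors and normalises URLs.

```python
import re
from typing import Optional

# The Rank Math rule above, rewritten in Python syntax ("$1" becomes "\1").
TAG_PAGINATION = re.compile(r"/tag/(.+)/page/[0-9]+/")


def redirect_target(path: str) -> Optional[str]:
    """Return the redirect destination for a tag pagination path, or None."""
    match = TAG_PAGINATION.fullmatch(path)  # anchored to the whole path
    return match.expand(r"/tag/\1/") if match else None


for path in ["/tag/prompt-engineering/page/3/", "/tag/llm/page/12/", "/tag/llm/"]:
    print(path, "->", redirect_target(path))
```

The first two paths map to their tag’s first page and the bare tag URL is left alone. Because `(.+)` can match across slashes, `([^/]+)` is a stricter alternative if you want the capture limited to a single slug.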

Six more regex rules handled category pagination. Fifteen individual redirects caught renamed posts and old slugs. Three bot probe entries deleted.
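Taken together, the cleanup amounts to a small redirect table. The sketch below models it in Python with hypothetical paths, and one generic category rule stands in for the six category rules. Checking exact matches before patterns is my assumption about a sensible design, not a description of how Rank Math orders its rules.

```python
import re
from typing import Optional

# Hypothetical exact redirects for renamed posts and old slugs.
EXACT_REDIRECTS = {
    "/old-slug/": "/new-slug/",
}

# Regex rules for generated pagination URLs; ([^/]+) keeps each capture to one segment.
PATTERN_REDIRECTS = [
    (re.compile(r"/tag/([^/]+)/page/[0-9]+/"), r"/tag/\1/"),
    (re.compile(r"/category/([^/]+)/page/[0-9]+/"), r"/category/\1/"),
]


def resolve(path: str) -> Optional[str]:
    """Return where a 404ing path should redirect, or None to leave it a 404."""
    # Exact matches first, so a deliberate one-off rule is never shadowed by a pattern.
    if path in EXACT_REDIRECTS:
        return EXACT_REDIRECTS[path]
    for pattern, replacement in PATTERN_REDIRECTS:
        match = pattern.fullmatch(path)
        if match:
            return match.expand(replacement)
    # Anything else, such as a bot probe for a file that never existed, stays a 404.
    return None


print(resolve("/old-slug/"))            # /new-slug/
print(resolve("/category/ai/page/2/"))  # /category/ai/
print(resolve("/wp-config.php.bak"))    # None
```

Bot probes fall through to None on purpose: redirecting them would only hide noise, so deleting the log entries is the cleaner fix.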

Total time: twenty minutes. Seventy-three broken doors, closed.

What to expect after the fix

Within 2–3 days: Rank Math’s redirection counter starts registering hits. The 404 monitor stops accumulating new entries.

Within 5–7 days: Search Console 404 count begins falling. Indexed page count starts rising.

Within 2–3 weeks: Full picture visible. Either freed crawl budget allows Google to index more content — or the problem is content quality, not crawl efficiency, and the remedy is different.

The universality of 44%

I doubt this is unique to seekrates-ai.com. It looks like a quiet reality of WordPress publishing, one that most site owners never investigate.

If you have published more than 50 posts, the odds are reasonable that Google is ignoring a meaningful fraction of them.

Go to Google Search Console. Click Indexing. Click Pages. Read the grey box. Then read the reasons underneath it.

The fix took twenty minutes of redirect work, five minutes of SQL investigation, and ten minutes of reindex requests. The hardest part was not the work itself. The hardest part was looking.

Why AI consensus changes the indexing equation

The industry trend toward single-LLM contracts is creating a content monoculture: organisations standardise on one model, ask it the same questions, and get structurally similar answers.

My working assumption is that Google’s quality judgement rewards uniqueness, at least in part. Content built on AI consensus, where five models are asked the same question and only the consensus survives, produces answers structurally different from single-model output. The disagreements between models are visible. The confidence levels are explicit. The methodology is documented.

That is what the 84 pages that passed Google’s quality judgement have in common. Not longer content. Not more keywords. Consensus-validated data that a single model could not produce alone.

The 44% problem has a technical fix. The reason the other 56% is worth fixing is that AI consensus content has something to say that the single-LLM monoculture cannot replicate.

Mohan Iyer is a retired industrial engineer based in New Zealand who builds AI-native development tools. Seekrates AI queries five leading language models simultaneously and publishes only consensus-validated results. Browse 201 posts at seekrates-ai.com.

Related posts:

– How do multiple AIs reach agreement through the consensus process? → seekrates-ai.com/multiple-reach-agreement/

– What is AI Consensus and how does multi-LLM decision making work? → seekrates-ai.com/consensus-does-multi-llm/

– How does AI consensus help with hallucination detection? → seekrates-ai.com/does-consensus-help/