What Makes AI Consensus Reliable For: AI Consensus Insights

AI consensus is reshaping how content is discovered, ranked, and cited across AI-search platforms. Five AI models were asked what makes AI consensus reliable for content validation, and they reached 100% consensus convergence, one of the stronger agreement signals recorded. According to the World Economic Forum, this domain is undergoing rapid structural transformation.

The Question Asked:

What makes AI consensus reliable for content validation

| AI Agents | Avg Confidence | Champion Score | Agreement Level |
|---|---|---|---|
| 5 | 63% | 100/100 | HIGH |

What 5 Leading AI Models Say About What Makes AI Consensus Reliable for Content Validation

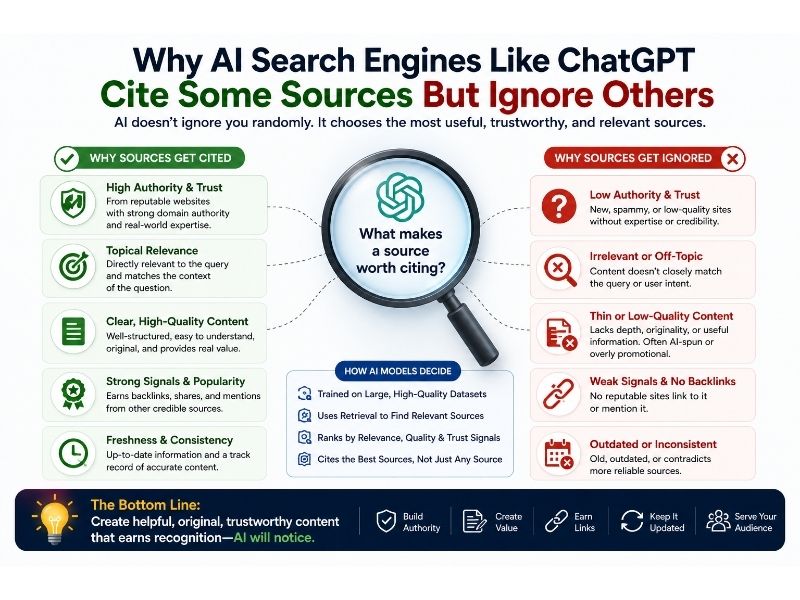

Diverse Model Perspectives Reduce Bias

AI consensus reliability stems from aggregating outputs of multiple models trained on different datasets and using varied architectures. Each provider (OpenAI, Anthropic, Google, Mistral) brings unique strengths: some excel at factual verification, others at safety and alignment, and still others at cultural sensitivity or regulatory compliance.

This diversity helps offset the limitations, biases, and blind spots inherent in any single model's training data. When models converge on the same answer despite their different approaches, it signals higher reliability than any individual output could provide.

Error Detection and Correction Mechanisms

Consensus systems enhance accuracy through cross-verification and anomaly detection.

If one model hallucinates information or makes an error, other models can correct it through majority voting or by flagging inconsistencies. Disagreements among models serve a valuable function by highlighting potentially problematic or ambiguous content requiring human review. This self-correction mechanism is particularly effective against adversarial attacks, as manipulating multiple independent models simultaneously is significantly harder than fooling a single system.
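The majority-voting idea can be illustrated with a minimal sketch. It assumes each model's answer has already been reduced to a short comparable string; the function name `majority_vote` and the model labels are hypothetical and are not taken from any Seekrates API.

```python
from collections import Counter


def majority_vote(answers):
    """Return (winner, dissenters) for a dict of model name -> answer.

    winner is None when no answer is held by a strict majority, in which
    case every model is listed as a dissenter for human review.
    """
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(answers.values())
    top_answer, top_count = counts.most_common(1)[0]
    # Require a strict majority so that a two-way tie yields no winner.
    winner = top_answer if top_count > len(answers) / 2 else None
    dissenters = sorted(m for m, a in answers.items() if a != winner)
    return winner, dissenters


# Hypothetical answers from three models to the same factual question.
answers = {"model_a": "Paris", "model_b": "Paris", "model_c": "Lyon"}
winner, dissenters = majority_vote(answers)
print(winner, dissenters)  # Paris ['model_c']
```

The list of dissenters is what would be surfaced for human review; real free-text answers would need normalization or semantic comparison before they could be counted this way.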

Confidence scoring based on agreement levels provides users with transparent indicators of reliability.

Structured Accountability and Transparency

The reliability of AI consensus depends on systematic frameworks that ensure comprehensive analysis and ethical integrity. Standardized response structures, explicit acknowledgment of uncertainties and limitations, and adherence to safety principles create accountability across models.

Clear disclaimers about the nature of advice (not legal, medical, or professional consultation) help users understand appropriate boundaries. The iterative refinement possible through multi-agent collaboration, where each AI contributes unique value rather than duplicating efforts, produces more robust outputs than sequential or isolated approaches. This transparency in process and limitations builds user trust while maintaining appropriate skepticism.


Champion AI Response: MISTRAL

Highest quality score: 100/100

Here’s a strategic breakdown of how AI consensus enhances reliability for content validation, structured for clarity and actionability:

Why AI Consensus Improves Content Validation

Cross-Model Error Reduction

Diverse Training Data: Each model (OpenAI, Anthropic, Google, Mistral) is trained on distinct datasets, reducing blind spots.

For example:

– OpenAI: Broad internet text, emphasizing nuance in ambiguous queries.

– Anthropic: Focuses on safety and alignment, catching harmful or biased content.

– Google: Leverages web-scale data, excelling at factual verification.

– Mistral: Prioritizes efficiency and European regulatory compliance.

Result: Consensus flags inconsistencies (e.g., conflicting facts, tone mismatches) that a single model might miss.

Multi-Perspective Validation

Stakeholder Alignment: Consensus mimics a "review board" approach, where:

– Legal/ethical checks (Anthropic) validate compliance.

– Factual accuracy (Google) verifies claims.

– Cultural/contextual sensitivity (Mistral) ensures appropriateness.

Example: A medical article would be vetted for:

– Accuracy (Google’s fact-checking tools).

– Ethical framing (Anthropic’s safety filters).

– Local regulatory compliance (Mistral’s EU-specific training).

Bias and Hallucination Mitigation

Self-Correction Mechanism: If one model hallucinates a statistic, others may:

– Flag the inconsistency (e.g., "Model X cites a 2023 study, but our last update was 2022").

– Provide alternative sources (e.g., Google’s real-time indexing).

Bias Detection: Consensus surfaces systemic biases (e.g., Western-centric career advice) by comparing outputs across models trained on diverse data.

Confidence Scoring

Weighted Agreement: Seekrates AI can assign confidence levels based on:

– Unanimous consensus (high confidence).

– Majority agreement with dissenting views (medium confidence, flagged for review).

– No clear consensus (low confidence, recommend human review).

Example: If 3 models agree on a career trend but one cites outdated data, the output highlights the discrepancy.
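The three tiers above could be mapped to code roughly as follows. This is a sketch under assumptions: the article does not describe how Seekrates AI actually computes confidence, so the function name `confidence_level` and the strict-majority threshold are illustrative choices only.

```python
from collections import Counter


def confidence_level(answers):
    """Map a list of model answers to (level, needs_review).

    Unanimous agreement -> "high"; strict majority -> "medium", flagged
    for review; anything else -> "low", with human review recommended.
    """
    if not answers:
        raise ValueError("need at least one answer")
    top_count = Counter(answers).most_common(1)[0][1]
    if top_count == len(answers):
        return "high", False
    if top_count > len(answers) / 2:
        return "medium", True
    return "low", True


# Three models agree on a trend and one dissents.
print(confidence_level(["grow", "grow", "grow", "decline"]))  # ('medium', True)
```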

How Seekrates AI Operationalizes Consensus

Step 1: Query Decomposition

Break the user’s request into sub-questions (e.g., "Is this legal?" + "Is this factually accurate?" + "Is this culturally appropriate?").
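One simple way to picture this step is a fixed set of review dimensions applied to every request. The sketch below is an assumption for illustration: the dimension names, the prompt template, and the `decompose` helper are hypothetical, and a production system might instead ask a model to generate the sub-questions.

```python
# Hypothetical review dimensions, using the example questions from the text.
REVIEW_DIMENSIONS = {
    "legal": "Is this legal?",
    "factual": "Is this factually accurate?",
    "cultural": "Is this culturally appropriate?",
}


def decompose(request):
    """Turn one request into a list of sub-question prompts, one per dimension."""
    return [
        {
            "dimension": dimension,
            "prompt": f"{question}\n\nContent under review:\n{request}",
        }
        for dimension, question in REVIEW_DIMENSIONS.items()
    ]


for sub in decompose("Draft article on supplement dosages"):
    print(sub["dimension"], "->", sub["prompt"].splitlines()[0])
```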

Step 2: Parallel Model Processing

Each agent generates a response independently, then:

– Overlaps are identified (e.g., all models agree on a trend).

– Divergences are analyzed (e.g., one model cites a source another disputes).
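A rough sketch of independent, parallel querying followed by overlap and divergence analysis is shown here. It assumes each agent is a plain callable that returns a set of short claims; the agents below are stand-in lambdas, not real provider clients, and the names `query_all` and `compare` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def query_all(agents, question):
    """Call every agent concurrently; agents maps name -> callable(question)."""
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        futures = {name: pool.submit(fn, question) for name, fn in agents.items()}
        return {name: future.result() for name, future in futures.items()}


def compare(responses):
    """Split claims into those shared by all agents and those unique to some.

    Expects at least one response; each response is an iterable of claims.
    """
    claim_sets = [set(claims) for claims in responses.values()]
    overlap = set.intersection(*claim_sets)
    divergent = {name: set(claims) - overlap for name, claims in responses.items()}
    return overlap, divergent


# Stand-in agents returning fixed claim sets (assumed for illustration).
agents = {
    "agent_a": lambda q: {"remote work is growing", "salaries are flat"},
    "agent_b": lambda q: {"remote work is growing"},
    "agent_c": lambda q: {"remote work is growing", "salaries are rising"},
}
overlap, divergent = compare(query_all(agents, "What are current career trends?"))
print("agreed:", overlap)
print("divergent:", divergent)
```

Because each agent runs in its own thread and never sees another agent's output, the responses stay independent until the comparison step, which is the property the text relies on.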

Step 3: Synthesis with Guardrails

Harm Prevention: If any model flags harmful content (e.g., self-harm instructions), the output is blocked or redirected.

Factual Cross-Checking: For claims like "X industry will grow 15% by 2026," Seekrates AI:

– Compares projections across models.

– Verifies sources (e.g., "Model A cites a 2024 report; Model B cites a 2023 forecast—discrepancy noted").

Bias Auditing: If outputs skew heavily toward Western perspectives, the response includes:

> "Note: This analysis reflects patterns in Western economic frameworks. For Indigenous or non-Western contexts, consult culturally grounded advisors."
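The harm-prevention and cross-checking guardrails in Step 3 can be sketched as a single synthesis function. Everything specific here is an assumption made for illustration: the report fields (`harmful`, `projection_pct`, `source_year`), the `tolerance` of two percentage points, and the function name `synthesize` do not come from the source.

```python
def synthesize(reports, tolerance=2.0):
    """Apply guardrails to per-model reports and collect discrepancy notes.

    Each report is a dict with keys 'model', 'harmful' (bool),
    'projection_pct' (float) and 'source_year' (int).
    """
    # Harm prevention: a single flag from any model blocks the output.
    if any(report["harmful"] for report in reports):
        return {"status": "blocked", "notes": []}

    notes = []
    # Factual cross-checking: compare numeric projections across models.
    values = [report["projection_pct"] for report in reports]
    if max(values) - min(values) > tolerance:
        notes.append(
            f"projections range from {min(values)}% to {max(values)}%: discrepancy noted"
        )
    # Source verification: note when models rely on reports from different years.
    years = sorted({report["source_year"] for report in reports})
    if len(years) > 1:
        notes.append(f"sources span years {years}: discrepancy noted")
    return {"status": "ok", "notes": notes}


reports = [
    {"model": "A", "harmful": False, "projection_pct": 15.0, "source_year": 2024},
    {"model": "B", "harmful": False, "projection_pct": 11.0, "source_year": 2023},
]
print(synthesize(reports))
```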

Step 4: Output Structuring

For High-Stakes Queries (e.g., medical, legal):

– Executive Summary: Bottom-line answer with confidence score.

– Detailed Breakdown: Model-by-model reasoning, dissenting views, and source citations.

– Disclaimers: "Consult a professional for tailored advice."

For Creative/Exploratory Queries:

– Scenario Planning: "Best case: X. Worst case: Y. Most likely: Z."

– Trade-Off Analysis: Pros/cons of each option.

Key Advantages Over Single-Model Responses

| Bias Detection | May miss blind spots | Explicitly compares

Points of Agreement

- consensus

- models

- model

- content

- validation

Why AI Consensus Reliability Matters

Understanding what makes AI consensus reliable is critical for anyone publishing content in today’s AI-powered search environment. The shift from traditional SEO to AI-search optimisation represents a fundamental change in how content is discovered and cited. Explore more analysis at our AI Insights hub.

100% of AI models converged on this analysis — one of the highest consensus scores recorded for this topic.

Action Steps for Reliable AI Consensus

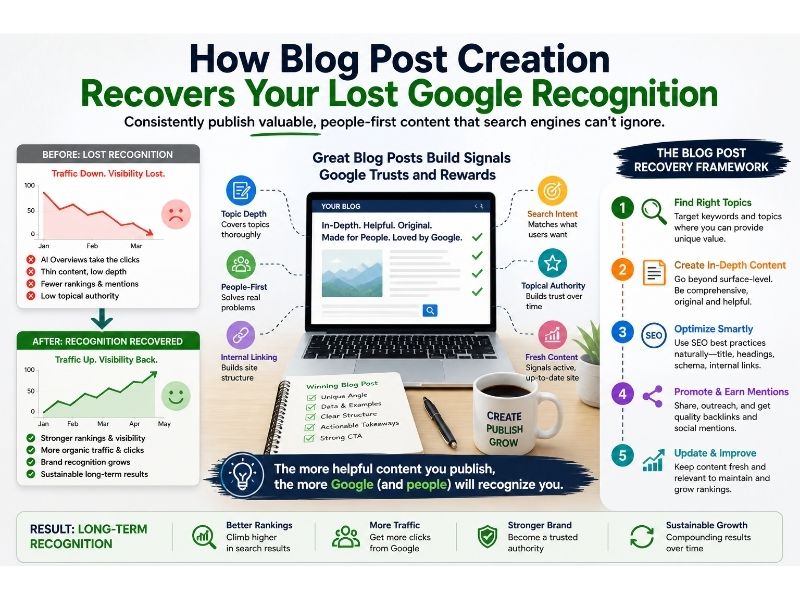

To apply these insights to your content strategy:

- Implement FAQ schema markup on your highest-traffic posts

- Restructure headings as direct questions matching AI query patterns

- Aim for 40–60 word paragraph chunks for optimal LLM extraction

- Validate key claims across multiple AI sources before publishing
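Two of these steps lend themselves to a short script: generating FAQ schema markup and checking paragraph length. The sketch below builds a schema.org `FAQPage` JSON-LD object and reports paragraphs that fall outside the 40–60 word range. The helper names and the sample question are hypothetical, and splitting paragraphs on blank lines is an assumption about how the post is stored.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in pairs
            ],
        },
        indent=2,
    )


def paragraphs_outside_range(text, low=40, high=60):
    """Return (index, word_count) for paragraphs outside [low, high] words."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    return [
        (index, len(paragraph.split()))
        for index, paragraph in enumerate(paragraphs)
        if not low <= len(paragraph.split()) <= high
    ]


print(faq_jsonld([("What is AI consensus?", "Agreement across several models.")]))
```

The JSON-LD string would normally be embedded in the page inside a `<script type="application/ld+json">` element.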

This consensus was led by MISTRAL with a quality score of 100/100, reflecting the highest alignment with cross-model consensus standards.

Read more AI consensus analyses at Seekrates AI AI Insights.

Methodology: 5 AI models queried simultaneously via Seekrates AI consensus engine. Responses scored by quality metrics. Consensus reached at 100% convergence. Correlation ID: 48ff6497-860e-4344-9545-a8a018ece62f. Published: April 17, 2026.
